Flaky Test Detection

Flaky Score is an AI-driven feature that evaluates the stability of test cases by analyzing their execution history. It indicates the likelihood of a test case behaving unpredictably.

Flaky test cases can disrupt testing pipelines and undermine confidence in test results. Traditionally, identifying such tests required manually comparing execution results across multiple runs. The Flaky Score automates this process.

QA Managers can configure the settings used to calculate the Flaky Score so that it reflects their own testing processes and methodologies.

For example, the following table shows the results of three test cases, each executed eight times. A test case that consistently passes (Test Case A) or consistently fails (Test Case B) is non-flaky, because its outcome is predictable. A test case whose result switches between Pass and Fail across runs (Test Case C) is flaky.

| Test Case Name | Run 1 | Run 2 | Run 3 | Run 4 | Run 5 | Run 6 | Run 7 | Run 8 | Classification |
|---|---|---|---|---|---|---|---|---|---|
| Test Case A | Pass | Pass | Pass | Pass | Pass | Pass | Pass | Pass | Non-flaky |
| Test Case B | Fail | Fail | Fail | Fail | Fail | Fail | Fail | Fail | Non-flaky |
| Test Case C | Pass | Pass | Fail | Fail | Pass | Fail | Pass | Fail | Flaky |

Note

The system calculates the Flaky Score only for test cases that have been executed, based on their most recent executions. The Flaky Score ranges from 0 (not flaky) to 1 (flaky).

The system considers only the final execution status of each test case.

The system calculates the Flaky Score only from test executions within the same project.
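The product describes the Flaky Score as AI-driven and does not document its formula, so the following is only a minimal illustrative sketch of one common heuristic, the "flip rate": the fraction of consecutive runs whose final status changed. It is not the product's algorithm. It mirrors the rules in the note above (executed test cases only, final status only, same project only, most recent runs only). The names `Execution`, `flip_rate_score`, and the `window` parameter are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Execution:
    """Hypothetical record of one test case execution."""
    project: str
    final_status: str  # "Pass" or "Fail"; only the final status is used


def flip_rate_score(executions: List[Execution], project: str,
                    window: int = 8) -> Optional[float]:
    """Hypothetical flakiness heuristic (not the product's formula).

    Returns the fraction of consecutive runs whose final status changed,
    a value between 0 (never changes) and 1 (changes on every run).
    Returns None when the test case has no executions in the project.
    """
    # Use only executions from the requested project.
    statuses = [e.final_status for e in executions if e.project == project]
    # Keep only the most recent `window` runs (list assumed oldest-first).
    recent = statuses[-window:]
    if not recent:
        return None  # never executed: no score
    if len(recent) == 1:
        return 0.0  # a single run cannot show a status change
    flips = sum(1 for prev, curr in zip(recent, recent[1:]) if prev != curr)
    return flips / (len(recent) - 1)


if __name__ == "__main__":
    runs = {
        "Test Case A": ["Pass"] * 8,
        "Test Case B": ["Fail"] * 8,
        "Test Case C": ["Pass", "Pass", "Fail", "Fail",
                        "Pass", "Fail", "Pass", "Fail"],
    }
    for name, statuses in runs.items():
        history = [Execution("PROJ", s) for s in statuses]
        print(name, round(flip_rate_score(history, "PROJ"), 2))
    # Prints:
    # Test Case A 0.0
    # Test Case B 0.0
    # Test Case C 0.71
```

Applied to the example table, Test Cases A and B score 0 because their status never changes, while Test Case C changes status in 5 of its 7 consecutive pairs of runs and scores about 0.71. A flip-rate heuristic deliberately treats a consistently failing test as stable, which matches the table's classification of Test Case B as non-flaky.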

Filter Test Cases on Flaky Score

You can filter test cases by Flaky Score. The Flaky Score filter is available under Advanced Filters.

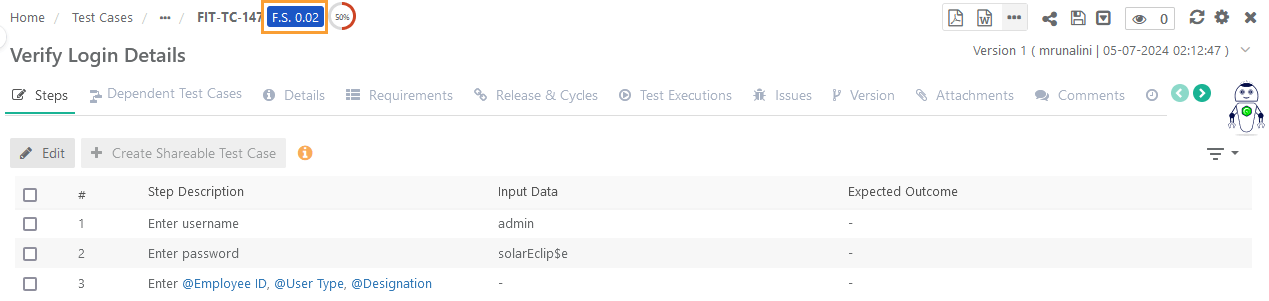

View Flaky Score on Test Case Detail Page

The generated Flaky Score is displayed next to the Test Case Key at the top of the screen.


View Flaky Score on Test Case Link Screens

You can show or hide the Flaky Score column on the Link Test Cases screen.

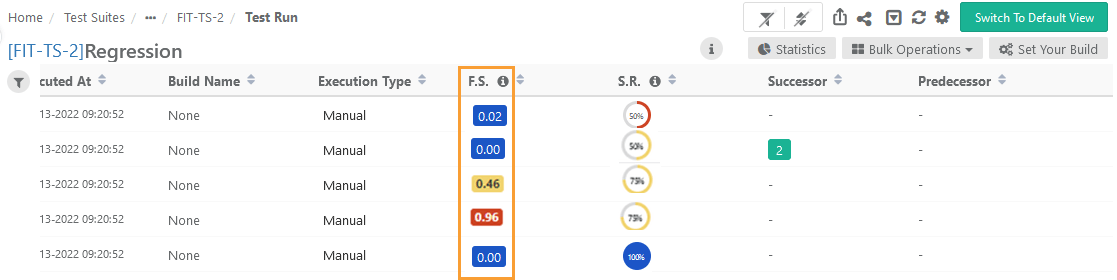

View Flaky Score on Test Execution Screen

The system calculates the Flaky Score according to the Flaky Score settings defined in Configuration. The Flaky Score indicates to the tester how risky a test case is likely to be to execute, and it provides a way to compare results before and after a test execution.

Go to the Test Execution screen.

Test Execution Detail View


In the Detail View, the Flaky Score is displayed at the top of the page.

Test Execution Default View

You can show or hide the Flaky Score column on the execution screen.

Click a Flaky Score value to drill down into the test executions and defects associated with that test case.